The Seattle Times reports Boeing’s Safety Analysis of 737 MAX Flight Control had Crucial Flaws.

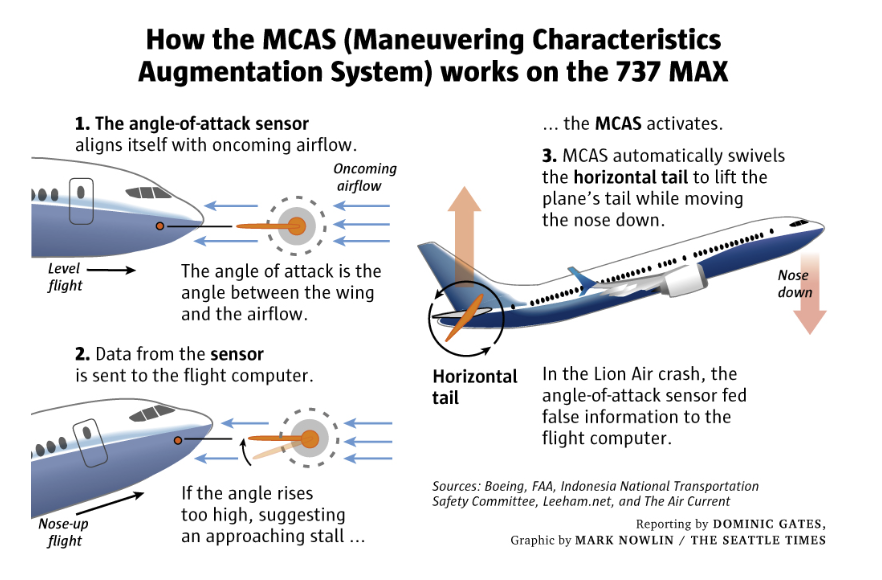

Boeing’s safety analysis of the flight control system called MCAS (Maneuvering Characteristics Augmentation System) understated the power of this system, the Seattle Times said, citing current and former engineers at the U.S. Federal Aviation Administration (FAA).

Last Monday Boeing said it would deploy a software upgrade to the 737 MAX 8, a few hours after the FAA said it would mandate “design changes” in the aircraft by April.

A Boeing spokesman said the 737 MAX was certified in accordance with the identical FAA requirements and processes that have governed certification of all previous new airplanes and derivatives. The spokesman said the FAA concluded that MCAS on the 737 MAX met all certification and regulatory requirements.

That sketchy report isn’t worth commenting on. This Tweet thread I picked up from ZeroHedge is. The thread was by Trevor Sumner, whose brother is a pilot and software engineer.

Flawed Analysis, Failed Oversight

The Seattle Times now has this update: How Boeing, FAA Certified the Suspect 737 MAX Flight Control System

Federal Aviation Administration managers pushed its engineers to delegate wide responsibility for assessing the safety of the 737 MAX to Boeing itself. But safety engineers familiar with the documents shared details that show the analysis included crucial flaws.

As Boeing hustled in 2015 to catch up to Airbus and certify its new 737 MAX, Federal Aviation Administration (FAA) managers pushed the agency’s safety engineers to delegate safety assessments to Boeing itself, and to speedily approve the resulting analysis.

The safety analysis:

- Understated the power of the new flight control system, which was designed to swivel the horizontal tail to push the nose of the plane down to avert a stall. When the planes later entered service, MCAS was capable of moving the tail more than four times farther than was stated in the initial safety analysis document.

- Failed to account for how the system could reset itself each time a pilot responded, thereby missing the potential impact of the system repeatedly pushing the airplane’s nose downward.

- Assessed a failure of the system as one level below “catastrophic.” But even that “hazardous” danger level should have precluded activation of the system based on input from a single sensor — and yet that’s how it was designed.
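The second bullet, about the system resetting after each pilot response, is easier to see with numbers. Below is a toy model, not Boeing's control law: each cycle the automatic system adds a fixed nose-down increment, the pilot counter-trims by a slightly smaller amount, and the system re-arms after every pilot input. The function name, the increment, and the correction are made-up values in arbitrary units, chosen only to show how a small per-cycle shortfall accumulates when activations are unlimited.

```python
from typing import Optional


def net_nose_down(cycles: int, increment: float, pilot_correction: float,
                  max_activations: Optional[int] = None) -> float:
    """Toy model (hypothetical units): net nose-down trim after `cycles` rounds.

    Each round the automatic system may fire once, adding `increment`;
    the pilot then counter-trims by up to `pilot_correction`.
    max_activations=None models a system that re-arms after every pilot input.
    """
    trim = 0.0
    fired = 0
    for _ in range(cycles):
        if max_activations is None or fired < max_activations:
            trim += increment
            fired += 1
        # The pilot pulls trim back toward neutral, but never past it.
        trim = max(0.0, trim - pilot_correction)
    return trim


# Re-arming system: each round leaves 0.5 units uncorrected, so 10 rounds leave 5.0.
print(net_nose_down(10, 2.5, 2.0))
# Same pushes, but limited to a single activation: the pilot fully recovers.
print(net_nose_down(10, 2.5, 2.0, max_activations=1))
```

The point of the comparison is that the danger lies less in any single push than in the repetition: a limit on how many times the system may act, absent from the unlimited case, turns a slow drift into a recoverable one-off.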

The people who spoke to The Seattle Times and shared details of the safety analysis all spoke on condition of anonymity to protect their jobs at the FAA and other aviation organizations.

Black box data retrieved after the Lion Air crash indicates that a single faulty sensor — a vane on the outside of the fuselage that measures the plane’s “angle of attack,” the angle between the airflow and the wing — triggered MCAS multiple times during the deadly flight, initiating a tug of war as the system repeatedly pushed the nose of the plane down and the pilots wrestled with the controls to pull it back up, before the final crash.

The FAA, citing lack of funding and resources, has over the years delegated increasing authority to Boeing to take on more of the work of certifying the safety of its own airplanes.

Comments From Peter Lemme, former Boeing Flight Controls Engineer

- Like all 737s, the MAX actually has two of the sensors, one on each side of the fuselage near the cockpit. But the MCAS was designed to take a reading from only one of them.

- Boeing could have designed the system to compare the readings from the two vanes, which would have indicated if one of them was way off.

- Alternatively, the system could have been designed to check that the angle-of-attack reading was accurate while the plane was taxiing on the ground before takeoff, when the angle of attack should read zero.

- “They could have designed a two-channel system. Or they could have tested the value of angle of attack on the ground,” said Lemme. “I don’t know why they didn’t.”
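Lemme's two alternatives, comparing both vanes in flight and checking them on the ground, can be sketched in a few lines. This is a minimal illustration, not Boeing's or Lemme's design: the function names and the 5-degree disagreement limit and 2-degree ground tolerance are assumptions picked for the example, not certified values.

```python
from typing import Optional

# Hypothetical thresholds in degrees; illustration only.
DISAGREE_LIMIT_DEG = 5.0
GROUND_TOLERANCE_DEG = 2.0


def ground_check(left: float, right: float,
                 tol: float = GROUND_TOLERANCE_DEG) -> bool:
    """Pre-takeoff check: while taxiing, both vanes should read close to zero."""
    return abs(left) <= tol and abs(right) <= tol


def validated_aoa(left: float, right: float,
                  limit: float = DISAGREE_LIMIT_DEG) -> Optional[float]:
    """Two-channel check: return the averaged angle of attack if the vanes agree,
    or None to signal that automatic trim should be inhibited."""
    if abs(left - right) > limit:
        return None
    return (left + right) / 2.0


print(validated_aoa(4.0, 5.0))   # vanes agree: averaged value is used
print(validated_aoa(4.0, 24.0))  # one vane is way off: None, system stays out
print(ground_check(0.5, 20.0))   # a stuck vane is caught before takeoff: False
```

Note that with only two sensors the comparison can tell that one of them is wrong, but not which one; the safe response is to stand the system down rather than guess.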

Short Synopsis

- Boeing 737 Max aircraft have aerodynamic and engineering design flaws.

- The sensors that can detect potential problems were not reliable. There are two sensors but the Boeing design only used one of them.

- Boeing cut corners to save money.

- To save even more money, Boeing allowed customers to order the planes without warning lights. The planes that crashed didn’t have those warning lights.

- There were pilot training and maintenance log issues.

- Finally, the regulators got into bed with the companies they were supposed to regulate.

Trump was quick to blame software, calling the planes too complicated to fly.

If the above analysis by Trevor Sumner is correct, the planes were too complicated to fly because Boeing cut corners to save money, then did not even have the decency to deliver them with the needed warning lights and operating instructions.

There may be grounds for a criminal investigation here, not just civil.

Regardless, Boeing’s decision to appeal to Trump to not ground the planes is morally reprehensible at best. Trump made the right call on this one, grounding the planes, albeit under international pressure.

Expect a Whitewash

Boeing will defend its decision. The FAA will whitewash the whole shebang to avoid implicating itself for certifying design flaws.

By the way, if the timelines presented are correct, the FAA got in bed with Boeing, under Obama.

Mike “Mish” Shedlock

This is only the end of the story; it all started here.

There was one more problem too. When the plane is in a badly out-of-trim state, the aerodynamic forces on the stabilizer are large enough that the backup manual trim system requires superhuman strength to use. This is a latent problem that goes back to the 707. There were some procedural workarounds for this, but pilots stopped being trained on them because no one ever used them: everyone knew that when you fly a 737 you have to stay on top of the trim and never get too far out of trim. Then a new system was installed that has a new failure mode that can leave you in a badly out-of-trim state.

I would say this is a systems engineering and regulatory oversight failure: allowing a backup system that doesn’t work well under the very conditions when you need it most.

Ok, I am a software engineer from MIT. And I write software to control planet-scale systems of great complexity. If the Boeing systems behaved as described in the media, there was a big problem with both the software and the MCAS system design. It looks like Boeing is trying to address some of these, but not others.

Rule 1. Any software system will fail.

Rule 2. Do not allow a failing system to blindly keep reasserting its output. I.e. always build a feedback system to check whether the expectations of the system’s behavior are what is actually happening and stand down if not.

Rule 3. People are usually better equipped to deal with ongoing unexpected problems. I.e. computer is faster to react at first, but people make better decisions in the longer term. Stand down immediately if a person is asserting control. (At some point, there were mechanical interlocks that would snap off if computer’s controls were contrary to pilots’ controls; what happened to them?)

Rule 4. Be very careful/afraid of software control oscillations. I.e. software is very often designed with “If something is wrong, retry” logic on many layers. That includes “if some error happened, restart the software system”. These tend to cause the same bad thing to keep happening. Count the number of “retries” and very aggressively force the software to stand down (or first go into “safe” mode) when any indication of repeated retries for an unknown reason is seen.
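Rules 2 through 4 can be combined into a small supervisor that sits between an automatic controller and the actuator. This is a hypothetical sketch of the commenter's rules, not any real avionics interface: the class name, the `max_retries` value of 2, and the choice to latch disengaged until an explicit reset are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AutoTrimSupervisor:
    """Hypothetical wrapper around an automatic trim command (illustration only)."""
    max_retries: int = 2   # assumed limit on unexplained mismatches (Rule 4)
    retries: int = 0
    engaged: bool = True

    def step(self, command: float, pilot_input: bool,
             response_matches_expectation: bool) -> float:
        """Return the command to apply this cycle; 0.0 means the system stood down."""
        if not self.engaged:
            return 0.0  # latched off: once stood down, stay down until reset()
        # Rule 3: a person asserting control wins immediately.
        if pilot_input:
            self.engaged = False
            return 0.0
        # Rule 2: compare expected vs. actual behavior; count each mismatch as a retry.
        if not response_matches_expectation:
            self.retries += 1
            # Rule 4: stand down aggressively once retries exceed the limit.
            if self.retries > self.max_retries:
                self.engaged = False
                return 0.0
        return command

    def reset(self) -> None:
        """Explicit re-arm, e.g. by crew action (assumed, not automatic)."""
        self.retries = 0
        self.engaged = True


sup = AutoTrimSupervisor()
for _ in range(4):
    print(sup.step(1.0, pilot_input=False, response_matches_expectation=False))
# Prints 1.0 twice (tolerated retries), then 0.0 twice (stood down and latched).
```

Latching is the key design choice here: a system that silently re-arms after standing down reproduces exactly the retry loop Rule 4 warns about.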

Well, here are some ideas, free for the taking, but they come with a bunch of difficult questions:

As an investigator, I’d ask all these questions, and more:

Focusing on “The Airflow”, which moves towards the 737 aircraft’s sensors: if such airflow is being pushed up by fast-rising air masses (winds, thermal updrafts, etc.), could it trick MCAS into believing that it is encountering an approaching stall, when in fact there is none? For example, say you fly level, at a reasonable speed, over a desert. It’s super hot today, 120 degrees in Ethiopia, and the heated air of the desert rises very fast, faster than normal.

QU: Does this airflow consisting of fast rising super heated desert air make MCAS believe that the “nose is up”, because the data suggest that the angle of attack is too high?

The same scenario could happen over a very heated ocean, which produces fast rising humid air masses. Is this being considered in MCAS? Has MCAS been tested under these conditions? Seattle is usually cool, rainy, maybe foggy, but it rarely if ever encounters fast rising hot, dry or humid air masses. So, where did MCAS get tested?

What are the assumptions? Does the MCAS assume that the oncoming airflow is always “parallel to the level flight horizon”? How does it know?

We all know that a pilot flying blind, in darkness, in clouds, in fog must rely on the instruments. If the instruments provide erroneous data, the pilot is either lucky or dead. You know, the “I’d rather be lucky than right” deal!

Any instrument that takes over for the pilot must be getting all the correct data. What are the input data into MCAS, and what are the possible output data, under all possible circumstances?

Does MCAS get GPS data? Temperature data? Weather? Wind? Surely it must get data that tells it the most recent speed, the current cruising altitude, the altitude the plane is supposed to be at according to the flight plan, the recent increase or decrease in altitude above sea level, the most recent changes, and the most likely upcoming changes in terrain height? Right? So, how much AI is there?

What happens if one of these data items is wrong or unavailable?

What are the fallback provisions?

Where’s the point when the MCAS shuts down and says to the pilot: “You are on your own, because of conflicting, unreliable, unavailable data”?

Is there such a point?

(In the movie “Airplane”, this is the point when the warning light named “Strange” starts blinking, causing the pilot to say “Hmmm, that’s strange!”)

(Plus, yeah, sorry, but if I cannot make fun of terrible accidents and important things, then I am not using my imagination to the fullest. So yada yada!)

Can the pilot switch the system off if it is clearly malfunctioning?

All that comes to mind, just thinking, ranting, raving about the issue.

Sometimes really obvious things are not even considered, just as fish are no longer aware of the water. I am asking because I don’t know.

Rule 1: “If you don’t know, you have to ask!”

Rule 2: “If you don’t know that you don’t know, you can’t even know to ask!”

One more thing.

In my experience, systems in such important “parts” should be highly redundant, and so should the sensors and the commands to abort automatic functions.

I think there could also be other design problems: for instance, the recent German pilot suicide could have prompted some automated “protections” against human intervention during standard phases of flight, preventing an automatic procedure from being aborted by manual instructions from the pilots.

That’s why a pilot with only 200 hours was given such a responsibility.

Yes, single points of failure must be avoided. Hence critical sensors must be redundant.
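One common way to make a sensor channel redundant is triplex voting: read three independent sensors and take the median, so a single wild reading is outvoted instead of acted on. The comment does not specify a scheme, and the article says the MAX carries two angle-of-attack vanes, so the third sensor here is an assumption; the function name and the 5-degree outlier limit are likewise hypothetical.

```python
import statistics
from typing import List, Tuple


def triplex_vote(readings: List[float], limit: float = 5.0) -> Tuple[float, List[int]]:
    """Median-vote three redundant readings (hypothetical scheme).

    Returns the voted value and the indices of sensors that deviate from it
    by more than `limit`, so they can be flagged for maintenance.
    """
    if len(readings) != 3:
        raise ValueError("triplex voting needs exactly three readings")
    voted = statistics.median(readings)
    suspects = [i for i, r in enumerate(readings) if abs(r - voted) > limit]
    return voted, suspects


# One failed sensor reading 24 degrees is outvoted and flagged.
print(triplex_vote([4.0, 4.5, 24.0]))
```

Compared with the two-sensor case, which can only detect a disagreement, a third sensor lets the system keep working through a single failure, at the cost of extra hardware and wiring.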

As for the distrust of the pilot, this is a wrong decision, if that was the decision. A malicious pilot has many ways to doom the plane. In any case, a pilot is more likely to save a damaged plane than to destroy a working one. Warnings and suggestions from a computer are welcome; asserting its decisions over the human ones is not.

Great, we have the average Joe commenting on airframe design and advanced engineering.

Oh Brother! Everyone’s an average Joe, until they accidentally find something that’s been overlooked. I’d rather reinvent the wheel a couple times, instead of not inventing it enough times. Likewise, 4 eyes see more than 2, and 20 eyes see more than 4.

Especially when it comes to software: how often is the software engineer lacking in imagination? Way too often! Lack of imagination – in software – leads to unanticipated problems, unexpected situations, unrealized proper responses to situations. All for a lack of imagination!

I don’t know about you, but the number of times I would like to (not literally) strangle the software engineers of Microsoft for providing unworkable, faulty, creepy, inefficient, borderline retarded software is in the thousands. Their latest accomplishment is to make “Copy and Paste” error-prone. Yes, Ctrl+C and Ctrl+V, something that worked for the last 30 years: the peeps at MSFT made it so that it sometimes doesn’t work.

Of course, Microsoft Office is not deadly, just annoying. How lucky we are that MSFT standards are not employed in “mission critical” systems.

Or at least, we should be so lucky!

To remedy some of these problems, I suggest that software engineers be taken away from their computer screens every so often, to play chess, climb mountains, fight bears, observe nature, breathe real air, read a book, pray a sandwich, eat philosophy, ride a dog and play with a horse... etc., in order to widen their horizons.

Sorry, but if I can’t vent or rant, then that whole free speech thing isn’t working, so forgive any unintended annoyances.

Boeing 737 Max needs to go back to the drawing boards.

It is scary to think that Boeing tried to correct a major design flaw by letting a software-triggered mechanism (MCAS) take control and lower the nose when the angle of attack exceeds the limit.

And now it is all the more shocking to learn that Boeing intends to upgrade the software instead of overcoming the fundamental design flaws that were the reason for introducing MCAS in the first place.

Stall prevention software should ideally come into action only at high altitudes to overcome a pilot’s instinctive reaction to pull up instead of lowering the nose while experiencing an aerodynamic stall.

Risky maneuvers like those activated by MCAS should never be used at low altitudes, from which recovery would be impossible even if the pilot deactivates the system.

Haven’t actually seen a picture of the plane, just empty ground and pictures of other planes that are not the 737 MAX. A little suspicious…

The explanation seems plausible as the plane went on a roller coaster ride prior to crashing.

“the regulators got into bed with companies they were supposed to regulate”

Part of a regulator’s job description.

That FAA Officer is Ali Bahrami.

In most countries, such an act is bribery!

Read pretty much the same analysis 5 days ago (moonofalabama.org/2019/03/boeing-the-faa-and-why-two-737-max-planes-crashed.html).

Boeing (which is in fact subsidized by a hose from military spending) wanted to compete with China and Airbus without designing a new airframe. The more it sinks in that it is the airframe and engines themselves that demand software and other corrections, the less people are going to want to buy it.

Why does the 737 Max require an MCAS system? Does a 747 require an MCAS system? Not that I know of. 757? 767? 777? 787? Is this system on any of these? What is so wrong with this airplane that it requires this system?

What’s wrong is that the engines are too big, too heavy and too far forward on the plane, so that it sort of destabilizes the plane…

Seems hard to believe they were pinching pennies on an inconsequential piece of equipment cost wise. Many parts missing along with a lot of wild speculation. Patience is required for the truth.

Don’t think so, Mish. Too many smart people touched this Engineering Change. If I had one guess, it was agreed to test with the one sensor configuration as a time saver and the dual sensor configuration phase-in got lost somewhere in the shuffle between Alpha/Beta testing and final, approved EC release.

I wonder if the FAA ever tested the case where the sensor malfunctions, and whether the recovery remedy is adequate. In addition, the case where there isn’t sufficient height clearance for MCAS to engage (or the plane crashes!). Someone failed BIG TIME and we now have two downed planes.

Moving the (bigger) engines forward created some new dynamics for the plane. The vertical pivot point between the engines (power) and the wings (lift) moved forward; likely the center of gravity did as well. Either change, much less both, would have created different handling characteristics. Taking those dynamics forward and trying to offset them at the back via the tail would add to a more unstable dynamic.

Since Boeing was pinching pennies, I wonder if, in the fullness of time, MCAS was designed based on the old airframe’s behavior, when that model needed to change to accommodate the new pivot and balance characteristics.

“Every experienced pilot once had only 200 hours of experience.”

Correct – We do not know if there was a training issue or not. But every pilot starts with 0 hours of passenger flight hours.

Every pilot starts with 0 hours of passenger flight hours, but they don’t start as First Officer.

Right! And what does this button do? Ooops, kabooom!

The regulatory state is doomed to fail because it has a design flaw: the best judgment of individuals is replaced by calculations regarding government guns. But just watch how there will be calls for more regulations.

That’s crazy talk!

MCAS = Self-Piloting Plane. What could go wrong? Especially if they skimp on the sensors.

Apparently in the Lion Air crash, the First Officer had less than 200 hours of flight experience. To be flying a plane as complicated as the 737 Max with so little flight experience is completely insane.

Every experienced pilot once had only 200 hours of experience.

Not in an extremely complicated new type of aircraft like this.

Not sure if I heard correctly, but thought it had been said that the first officer had only 200 hours experience in B737-Max. 200 hrs. of total flying experience sounds absurd to qualify a pilot for commercial airline service.

Well, I can talk smart, but I still have only 0 hours of pilot flight experience.

Are you suggesting pilot error? What basis?

A sensor apparently failed, the plane was not equipped with a redundant sensor and the pilot did not know what to do. It’s a combination of both.

Neither of us knows all the information. But as best as I can determine, MCAS overrode pilot actions, and there was no training or warning system (purchasable as an option) to tell pilots MCAS was malfunctioning and should be turned off. How is that pilot error? How does the inexperience of the copilot play into this?